SnapBubble: Instant Speech Bubble Translation in the Browser – open source

Project Overview

Why I built this

I love reading manga and comics, but the translation pace is painfully slow. Copying images and switching tabs kills immersion. I thought: what if I could translate speech bubbles directly on the page, client-side, with no backend?

That became SnapBubble: a browser extension that feels instant, survives hostile web environments (CSP, CORS, blob: URLs, weird image formats) and remains fully open source.

Self-imposed constraints

- 100% client-side. No backend, no servers, no user data leaving the browser beyond OCR/translation APIs.

- Real-time feel. If it’s slow, I won’t use it and nobody else will.

- Works on the messy web. CSP, iframes, lazy loading, .webp, blob: URLs, inline protections all handled.

- Educational. Others should be able to read, fork and extend the project.

–

Early attempts and what didn’t work

My first focus was OCR. “Just capture the image and run OCR” sounded simple, but the web pushed back: CSP, cross-origin images, blob: URLs, and inline protections broke naïve pixel access and tainted canvases.

Even when I could grab pixels, mapping OCR coordinates back onto the page wasn’t trivial: CSS scaling vs natural image size made overlays drift. At this point OCR felt fragile and unusable, both for me and for users.

The solution: safe capture pipeline

The fix was to stop doing direct pixel reads in the content script. Instead, I built a capture pipeline that always turns any image into a clean base64 data URL before OCR:

- Manual/element capture: target the on-page image, render it to a canvas at the correct scale and export as JPEG/PNG.

- Remote/blocked image capture: when an image is cross-origin, served as blob: or otherwise inaccessible, a background task fetches the bytes, converts via createImageBitmap + OffscreenCanvas to a standard JPEG, and returns a base64 data URL.

- Tab-capture fallback: for stubborn cases (strict CSP, custom loaders), I snapshot the visible region, crop to the element’s bounding box, and export to JPEG.

That safe data URL is then sent to OCR. This avoids tainted canvases, bypasses CSP/CORS restrictions, and even works for blob: images that external APIs normally can’t process.
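To make the ordering of the three capture routes concrete, here is a minimal sketch of how a content script might decide which route to try first. The names `CaptureStrategy`, `originOf`, and `captureOrder` are hypothetical and not taken from the SnapBubble codebase, and the sketch assumes `src` is the already-resolved absolute URL of the image.

```typescript
// Hypothetical names throughout; SnapBubble's real code may be organized differently.
type CaptureStrategy = "element-canvas" | "background-fetch" | "tab-snapshot";

// Extract "scheme://host[:port]" from an absolute http(s) URL, or null otherwise.
function originOf(url: string): string | null {
  const match = /^https?:\/\/[^\/?#]+/i.exec(url);
  return match ? match[0].toLowerCase() : null;
}

// Return the capture routes to try, in order, for one image source.
function captureOrder(src: string, pageOrigin: string): CaptureStrategy[] {
  if (src.startsWith("data:")) {
    // Assumption: inline data URLs are treated as safe to draw directly.
    return ["element-canvas", "tab-snapshot"];
  }
  if (originOf(src) === pageOrigin.toLowerCase()) {
    // Same-origin image: a direct canvas render is the cheapest route.
    return ["element-canvas", "background-fetch", "tab-snapshot"];
  }
  // Cross-origin and blob: sources skip the direct canvas read entirely.
  return ["background-fetch", "tab-snapshot"];
}
```

For example, `captureOrder("https://cdn.example.com/p1.webp", "https://reader.example.org")` puts the background fetch first, and the tab snapshot always stays last as the fallback.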

Once capture and positioning were stable, I layered in translation. I started with one provider, then expanded to multiple providers with key rotation and backoff. Finally, I tuned the pipeline so overlays render progressively and feel sub-second.

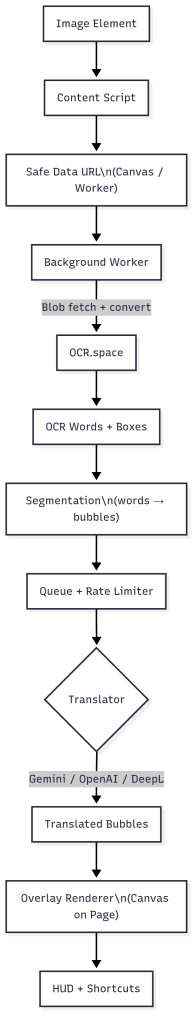

Architecture overview

Pipeline, step by step

- Image detection: Track the DOM and prioritize visible images.

- Safe capture: Route through background worker if remote/CORS; guarantee CSP-safe data URL.

- OCR: OCR.space as default, reliable for print text. OCR coordinates are precisely rescaled to the rendered image size.

- Segmentation: Cluster OCR words by proximity/alignment into speech bubbles.

- Translation: Micro-batch for throughput, rotate keys across providers, handle retries/backoff.

- Overlay rendering: One canvas per image, adaptive font sizing, incremental redraws for smooth UX.
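The coordinate rescaling in the OCR step comes down to two scale factors. The sketch below is illustrative only: `Box`, `Size`, and `rescaleBox` are hypothetical names, and it assumes the image fills its element with no `object-fit` cropping, padding, or border.

```typescript
// Hypothetical types; OCR providers report boxes in the pixel space of the captured image.
interface Box { left: number; top: number; width: number; height: number; }
interface Size { width: number; height: number; }

// Map an OCR box from captured-image pixels to the on-page (CSS) size of the element.
function rescaleBox(box: Box, captured: Size, rendered: Size): Box {
  const sx = rendered.width / captured.width;   // horizontal scale factor
  const sy = rendered.height / captured.height; // vertical scale factor
  return {
    left: box.left * sx,
    top: box.top * sy,
    width: box.width * sx,
    height: box.height * sy,
  };
}

// A 1000x2000 capture displayed at 500x1000 halves every coordinate.
const onPage = rescaleBox(
  { left: 100, top: 200, width: 50, height: 20 },
  { width: 1000, height: 2000 },
  { width: 500, height: 1000 },
);
// onPage is { left: 50, top: 100, width: 25, height: 10 }
```

If the capture was downscaled before OCR, `captured` should be the size actually sent to the OCR service, not the image's natural size.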

–

Hard problems + solutions

- CSP/CORS images: All risky fetch/convert happens in the background worker, never in content scripts.

- Precise positioning: Each capture records natural vs CSS size; OCR boxes are rescaled accordingly.

- Over-merging vs under-merging bubbles: Tuned clustering with alignment heuristics.

- 429 storms: Client-side limiter + micro-batches + key rotation across providers.

- Flicker/disappearing overlays: Keep a persistent bubble list and redraw progressively.

- Performance: Viewport prioritization + downscaling keeps OCR fast even on long pages.
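As an illustration of the micro-batching used against 429 storms, the sketch below groups bubble texts into small requests bounded by item count and character budget. `microBatch` and its limits are assumptions for the example, not values from the project.

```typescript
// Hypothetical helper: split texts into batches of at most maxItems entries
// and roughly maxChars characters (a single oversized text gets its own batch).
function microBatch(texts: string[], maxItems: number, maxChars: number): string[][] {
  const batches: string[][] = [];
  let current: string[] = [];
  let chars = 0;
  for (const text of texts) {
    const full = current.length >= maxItems || chars + text.length > maxChars;
    if (current.length > 0 && full) {
      // Close the current batch before it exceeds either limit.
      batches.push(current);
      current = [];
      chars = 0;
    }
    current.push(text);
    chars += text.length;
  }
  if (current.length > 0) batches.push(current);
  return batches;
}

const batches = microBatch(["aa", "bb", "cc", "dddddddd", "e"], 2, 6);
// batches is [["aa", "bb"], ["cc"], ["dddddddd"], ["e"]]
```

Smaller batches mean more requests but earlier first results, which is the trade-off behind progressive rendering.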

–

UX details

- Manual Select shortcut (Ctrl/Cmd + Alt + S).

- Test API button in popup to validate keys.

- Tooltips on all controls.

- Debug panel in plain English for transparency.

–

See SnapBubble in Action

–

What I learned building SnapBubble

- OCR in the wild is messy. Clean scans are easy; screenshots, compression, odd fonts, and low contrast aren’t. Preprocessing (scale, format, contrast) matters as much as the OCR engine.

- The browser is adversarial by design. CSP, iframes, cross-origin images, and blob URLs forced me to push capture into the background worker and message results back safely.

- Positioning is as hard as OCR. Tracking natural size vs CSS size and rescaling coordinates correctly made the difference between “almost works” and “feels native.”

- Grouping text into bubbles isn’t trivial geometry. Alignment and order heuristics drive readability; small tweaks had big UX impact.

- Perceived speed beats raw speed. Progressive drawing, micro-batches and viewport prioritization created the feeling of instant results, even with API latency.

- Reliability is UX. Timeouts, retries, and clear errors reduced frustration more than faster models.

- Extension architecture matters. I got comfortable with content-script, background messaging, safe DOM injection under CSP, and when to offload work to workers.
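To show why bubble grouping is sensitive to small tweaks, here is a deliberately simple proximity-only clustering sketch. It is not the project's tuned heuristic (which also uses alignment and order); `WordBox`, `gap`, `clusterWords`, and the `maxGap` threshold are hypothetical.

```typescript
// Hypothetical shape for one OCR word with its bounding box in page pixels.
interface WordBox { text: string; left: number; top: number; width: number; height: number; }

// Distance between two 1-D intervals; 0 when they overlap.
function gap(a0: number, a1: number, b0: number, b1: number): number {
  return Math.max(0, Math.max(a0, b0) - Math.min(a1, b1));
}

// Single-linkage clustering: words whose boxes are within maxGap on both axes share a bubble.
function clusterWords(words: WordBox[], maxGap: number): WordBox[][] {
  const parent = words.map((_, i) => i); // union-find forest, one node per word
  const find = (i: number): number => {
    while (parent[i] !== i) {
      parent[i] = parent[parent[i]]; // path halving
      i = parent[i];
    }
    return i;
  };
  for (let i = 0; i < words.length; i++) {
    for (let j = i + 1; j < words.length; j++) {
      const a = words[i];
      const b = words[j];
      const dx = gap(a.left, a.left + a.width, b.left, b.left + b.width);
      const dy = gap(a.top, a.top + a.height, b.top, b.top + b.height);
      if (dx <= maxGap && dy <= maxGap) parent[find(i)] = find(j);
    }
  }
  // Collect words under their root, keeping input order inside each bubble.
  const byRoot: WordBox[][] = words.map(() => []);
  words.forEach((w, i) => byRoot[find(i)].push(w));
  return byRoot.filter((group) => group.length > 0);
}
```

Raising `maxGap` merges neighbouring bubbles (over-merging) and lowering it splits lines of the same bubble (under-merging), which is where alignment heuristics earn their keep.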

–

Translation

- Maintain a provider abstraction layer with a single prompt contract.

- Auto-select models based on text length/quality.

- Rotate multiple keys with health checks.
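One way key rotation with a basic health signal could look is sketched below. It uses a passive cooldown (a key is skipped for a while after a failure) rather than active health probes, and `createKeyRotator`, `pick`, and `penalize` are hypothetical names.

```typescript
// Hypothetical per-key state: a key is usable once `now` reaches cooldownUntil.
interface KeyState { id: string; cooldownUntil: number; }

function createKeyRotator(ids: string[]) {
  const keys: KeyState[] = ids.map((id) => ({ id, cooldownUntil: 0 }));
  let next = 0; // index where the next round-robin scan starts

  // Return the next healthy key id in round-robin order, or null if all are cooling down.
  function pick(now: number): string | null {
    for (let i = 0; i < keys.length; i++) {
      const idx = (next + i) % keys.length;
      if (keys[idx].cooldownUntil <= now) {
        next = (idx + 1) % keys.length;
        return keys[idx].id;
      }
    }
    return null;
  }

  // Take a key out of rotation for cooldownMs, for example after an HTTP 429.
  function penalize(id: string, now: number, cooldownMs: number): void {
    for (const key of keys) {
      if (key.id === id) key.cooldownUntil = now + cooldownMs;
    }
  }

  return { pick, penalize };
}
```

Passing `now` in explicitly (instead of calling `Date.now()` inside) keeps the rotator easy to test; a caller would typically use `rotator.pick(Date.now())`.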

–

Caching & QoS

- Cache per-image hash for OCR + translation so scrolling back doesn’t re-trigger work.

- Lightweight, privacy-preserving telemetry (only timings/counters).
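A per-image cache could be keyed on a hash of the captured data URL, roughly as sketched here. The FNV-1a variant below hashes UTF-16 code units and is only 32 bits wide, so collisions are possible; a stronger hash would be a safer choice in practice. `fnv1a`, `BubbleResult`, and `getOrCompute` are hypothetical, and the real pipeline would be asynchronous.

```typescript
// 32-bit FNV-1a over the string's UTF-16 code units, returned as hex.
function fnv1a(input: string): string {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return (hash >>> 0).toString(16);
}

// Hypothetical cached payload: source text and translation for each bubble.
interface BubbleResult { source: string; translated: string; }

const resultCache = new Map<string, BubbleResult[]>();

// Return cached results for an image, running `compute` only on a cache miss.
function getOrCompute(dataUrl: string, compute: () => BubbleResult[]): BubbleResult[] {
  const key = fnv1a(dataUrl);
  const hit = resultCache.get(key);
  if (hit !== undefined) return hit;
  const fresh = compute();
  resultCache.set(key, fresh);
  return fresh;
}
```

With this shape, scrolling back to an already processed image returns the stored bubbles without calling OCR or translation again.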

–

Robustness

- Enforce rate limits, exponential backoff, and graceful degradation (show source text if translation fails).
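Exponential backoff with graceful degradation might be sketched as follows. The base delay, cap, and attempt count are illustrative assumptions, jitter is omitted for brevity, and `translate` and `sleep` are injected placeholders rather than real provider calls.

```typescript
// Delay before retry number `attempt` (0-based): base * 2^attempt, capped at capMs.
function backoffDelayMs(attempt: number, baseMs: number, capMs: number): number {
  return Math.min(capMs, baseMs * Math.pow(2, attempt));
}

// Try to translate; on repeated failure, fall back to showing the source text.
async function translateWithFallback(
  source: string,
  translate: (text: string) => Promise<string>,
  sleep: (ms: number) => Promise<void>,
  maxAttempts = 4,
): Promise<{ text: string; translated: boolean }> {
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return { text: await translate(source), translated: true };
    } catch {
      // Wait before the next attempt, but not after the final one.
      if (attempt < maxAttempts - 1) {
        await sleep(backoffDelayMs(attempt, 250, 4000));
      }
    }
  }
  // Graceful degradation: the overlay shows the original text instead of nothing.
  return { text: source, translated: false };
}
```

In a browser, `sleep` could be `(ms) => new Promise((resolve) => setTimeout(resolve, ms))`; injecting it keeps the retry logic testable without real waiting.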

–

UX & onboarding

- Guided setup with instant “Test API” check.

- Inline tooltips and accessibility-friendly overlays (adaptive typography, contrast).

–

Shipping

- Feature flags for risky changes.

- Automated compatibility checks across Chromium/Firefox.

- Plug-in surface for providers/heuristics without touching core.

–

If I were productizing it

Capture/OCR

- Move beyond OCR.space: use a hybrid stack combining YOLOv8 for speech bubble detection with on-device OCR (PaddleOCR or Tesseract.js) for privacy and offline capability, backed by a high-accuracy paid OCR for edge cases.

- Preprocess aggressively (resize, denoise, contrast-boost) before feeding images into detection/OCR to improve robustness on compressed or low-quality scans.

- Universal capture pipeline: element capture first → background fetch + createImageBitmap conversion → tab snapshot fallback for stubborn CSP/loader cases.

–

Final notes

SnapBubble began as a lightweight, backend-free prototype and matured into a complete system. Through it, I gained experience designing resilient browser pipelines, handling CSP/CORS challenges, and delivering a responsive user experience on top of unreliable APIs.

It’s open source: use your own API keys, extend it, and respect site terms.

Community: over 1k users in less than a week.